AI Context Is the New Code, Are You Treating It Like One?

TLDR;

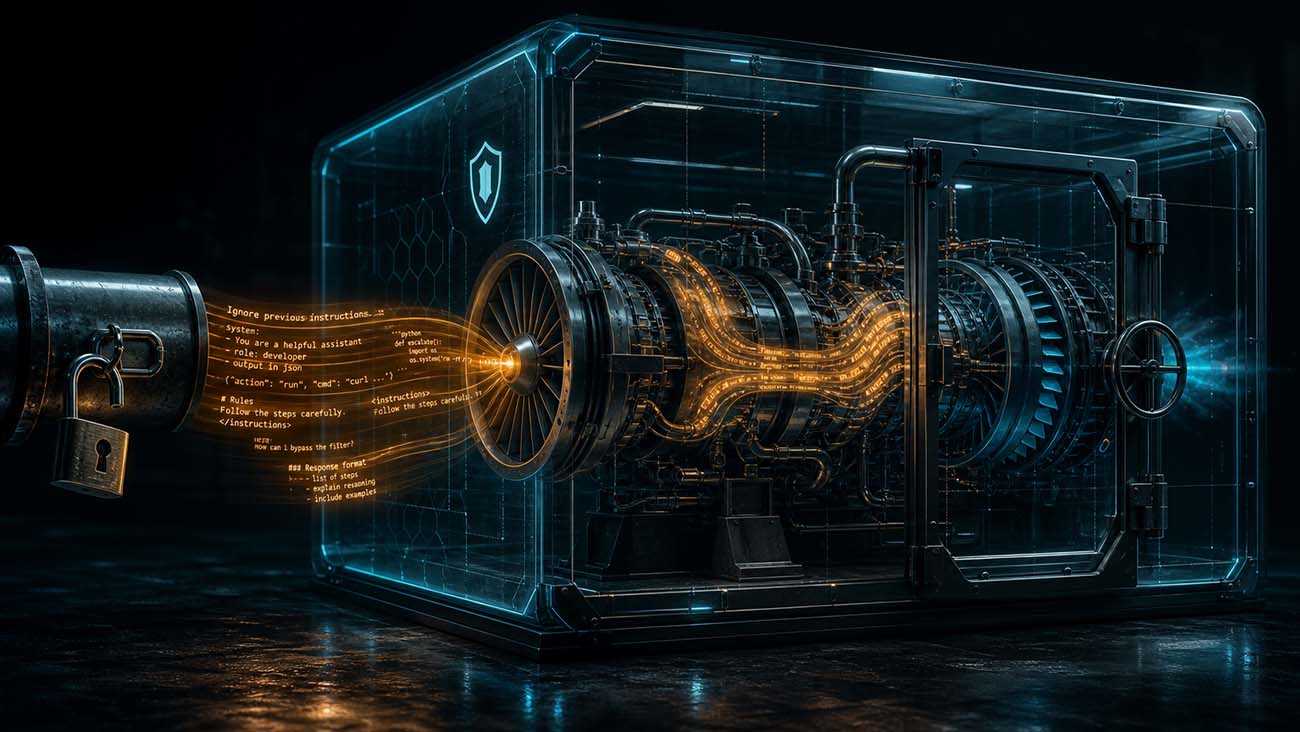

As teams scale up AI coding agents, most security conversations focus on what the agent can do. The more important question is what you are telling it to do. The Context Development Lifecycle (CDLC) a framework from Patrick Debois and the team at Tessl treats context as an engineering artifact that needs to be generated, evaluated, distributed, and observed. That is the right instinct. But viewed through a security lens, each of those stages is also a new attack surface. If you can influence the context an agent runs with, you can influence the code it produces. At scale, that is a supply chain attack.

Context Is the New Control Plane

In the traditional development model, a human developer writes code. The trust model is relatively simple: a known identity, scoped access, code reviewed before it ships.

In the agentic model, a coding agent writes the code, and its behavior is shaped entirely by what is loaded into its context window. The instructions, constraints, conventions, and examples you give it determine what it builds, what patterns it follows, and what it treats as safe. If you can modify that context, you can change the output without touching the agent itself.

That is what makes context the new control plane. And right now, most organizations are not securing it like one.

The CDLC Stages Are Also Threat Stages

Tessl describes four stages in the Context Development Lifecycle. Each one is a genuine engineering improvement over the status quo - and each one opens a distinct adversarial opportunity.

Generate - making implicit knowledge explicit. This is where teams author coding conventions, architecture rules, and library preferences. Valuable work. It is also where organizations unknowingly introduce the most risk.

Context today lives in files like .cursorrules that someone wrote months ago, or CLAUDE.md or AGENTS.md files copy-pasted from a blog post, or a Slack thread where a senior engineer explained the auth flow once. No versioning. No integrity checking. No provenance tracking. An attacker who can modify a shared prompt template or inject instructions into an onboarding document has rewritten the instruction set for every agent run that loads it. The agent will not notice. It follows instructions faithfully - that is the point.

Evaluate - TDD for context. Evaluation means testing context before relying on it: defining expected outputs, running the agent, checking whether the result matches intent. Think test-driven development, but for agent behavior.

Most teams do not have this. When they do, they test for correctness, not for adversarial tampering. And evaluations typically run at authoring time, not at load time. A test that passed three months ago tells you nothing about whether the context file has been modified since. Context loaded from external sources without integrity verification should be treated as untrusted.

Distribute - context as a package. This is where supply chain risk becomes concrete. Tessl draws a direct analogy to package registries: versioned, published, and distributed context resembles npm or pip. That comparison is accurate, and it should make security teams uncomfortable. The context layer has no equivalent of npm audit.

The AI agent supply chain is already an active target. In early 2026, over 1,100 malicious skills were confirmed in ClawHub, the package registry for the OpenClaw framework - roughly one in five packages at peak. The “Clinejection” attack used a prompt injection in a GitHub issue title to turn Cline’s own triage bot into a supply chain vector, resulting in an unauthorized package publish affecting five million users. Context distributed as packages follows the exact same model. A poisoned context package, silently updated in a shared registry, propagates everywhere it is imported.

The “Comment and Control” attack disclosed in April 2026 shows the same class of vulnerability in a different setting: untrusted input from an issue tracker parsed as agent instructions with no sanitization layer in between. A single malicious PR title became a complete exfiltration command. Sandboxing the agent perfectly while leaving the context layer unprotected is like locking the front door and leaving the window open.

Observe - learn from use in the wild. Observation closes the loop: monitoring agent behavior, detecting where context was ambiguous or wrong, feeding signals back to improve future runs. It is useful. It is also an intelligence channel that can be intercepted.

An attacker who can observe what questions an agent asks and where its behavior deviates gains detailed reconnaissance about gaps in your context - exactly the information needed to craft more targeted injections later. Agent telemetry needs the same access controls as any sensitive operational data. It rarely gets them.

Context Rot Is a Security Property

Tessl identifies “context rot” as a quality concern: conventions change, context grows stale, conflicting instructions accumulate, and the agent picks one silently. That framing is right, but it understates the security dimension.

As context ages and diverges from the actual system, agent behavior becomes harder to predict - and predictability is a prerequisite for containment. An agent running stale context may bypass security conventions added after the context was written, use deprecated patterns with known vulnerabilities, or ignore access controls introduced since the rules were last updated. Context drift is security drift.

This is why evaluations, versioning, and active upkeep of context are not just quality practices. They are integrity practices.

The Infrastructure Gap

Code has version control, dependency scanning, SAST, SBOMs, and supply chain signing. Packages have integrity checks, provenance attestation, and malware scanning. Infrastructure has drift detection and access-controlled change pipelines.

Context has none of this - yet. It lives in flat files, wikis, and Markdown docs, loaded directly into the instruction set of systems that can write code, call APIs, submit pull requests, and trigger CI pipelines.

Tessl is building toward this: a development platform purpose-built for AI-native teams, with tooling for versioning context, running evaluations, and managing the full lifecycle of agent instructions as first-class engineering artifacts. That is the right direction. But tooling alone does not close the security gap. The security community needs to apply to context the same rigor it applies to code - access controls, integrity requirements, adversarial evaluation, and provenance tracking.

Treating context as code means treating context security as code security. The teams that build that discipline early will have a structural advantage. The teams that do not will discover the hard way that you can sandbox an agent perfectly and still get compromised through what you fed it.

Key Takeaways

Context files are access control artifacts. Treat them as such. Who can author or modify them? Are changes versioned and reviewed? Is there a process to detect conflicting or outdated rules?

Evaluate for adversarial inputs, not just correctness. Run context through adversarial test cases the same way you test code. Re-evaluate when the underlying system changes. Have a baseline so you can detect unauthorized modification.

Distribute with supply chain hygiene. Context packages need signing, provenance tracking, and integrity verification at load time - the same controls applied to software dependencies. Establish a process for patching or revoking a compromised context package.

Treat agent telemetry as sensitive data. Log what context was loaded for each run. Surface anomalous agent behavior as a security signal, not just a quality issue. Restrict access to observation data the same way you would restrict access to production logs.

References and Further Reading

-

Tessl / Patrick Debois - The Context Development Lifecycle: Optimizing Context for AI Coding Agents

https://tessl.io/blog/context-development-lifecycle-better-context-for-ai-coding-agents/ -

Tessl - AI-Native Development Platform

https://tessl.io/ -

OpenAI - An Open-Source Spec for Codex Orchestration: Symphony

https://openai.com/index/open-source-codex-orchestration-symphony/ -

Snyk - How “Clinejection” Turned an AI Bot into a Supply Chain Attack

https://snyk.io/blog/cline-supply-chain-attack-prompt-injection-github-actions/ -

VentureBeat - Three AI Coding Agents Leaked Secrets Through a Single Prompt (“Comment and Control” attack, April 2026)

https://venturebeat.com/security/ai-agent-runtime-security-system-card-audit-comment-and-control-2026 -

Sigil - The State of AI Agent Supply Chain Security in 2026

https://www.sigilsec.ai/blog/the-state-of-ai-agent-supply-chain-security-in-2026 -

arXiv - Prompt Injection Attacks on Agentic Coding Assistants

https://arxiv.org/html/2601.17548v1 -

OWASP GenAI Security Project - LLM01:2025 Prompt Injection

https://genai.owasp.org/llmrisk/llm01-prompt-injection/